AI makes it easy to delegate not just the work, but our engagement with our work. It's not cruise control. We need to drive stick.

Architecture, error handling, data models. They don't reason about themselves. We're supposed to do that.

AI is an incredible force multiplier, but it multiplies whatever we bring to the table. Bring nothing, get nothing worth keeping.

The Autopilot Trap

It's tempting to hand a prompt to an AI agent and walk away. "Build me an auth system." "Refactor this module." The YouTube shorts make it look effortless: type a sentence, get a working app. Ship it.

But that's interpassivity: delegating not just the labor, but the thinking. The agent makes decisions about our data model, our error boundaries, our API surface, and we inherit all of them sight unseen. The code might compile. It might even work. But nobody on the team understands why it's shaped the way it is, because nobody was in the room when the decisions got made.

I've done this. I've kicked off an agent, context-switched to something else, and come back to a clean green build that I couldn't explain. It felt productive in the moment. Two sprints later, when I needed to extend that code, I was starting from zero. No context, no rationale, no memory of decisions I'd outsourced to a machine. Just code that appeared one day.

Plan First, Then Delegate

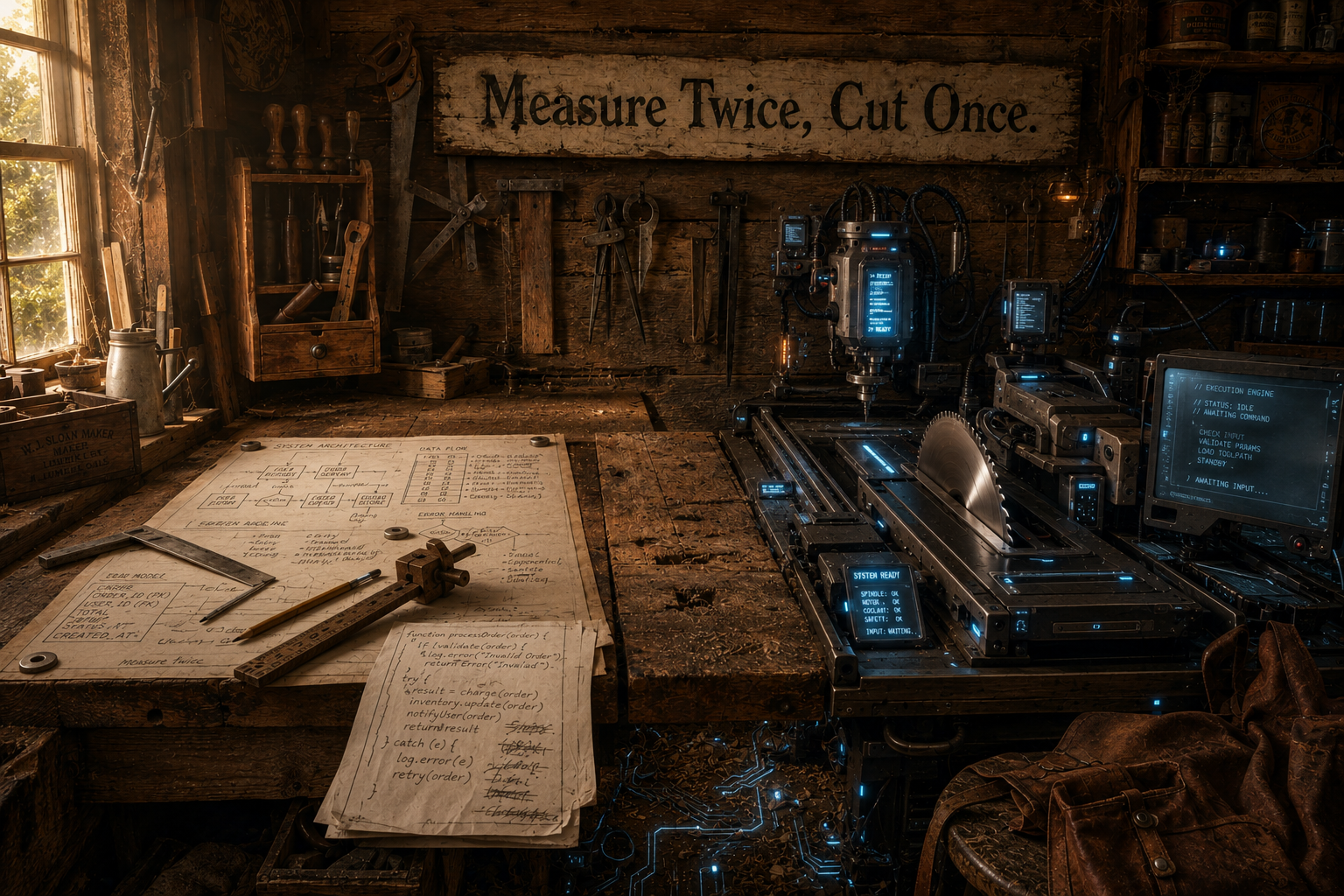

There's a saying with roots in both the British Army and the US Marine Corps: Proper Prior Planning Prevents Piss-Poor Performance. The 7 P's. Measure twice, cut once.

Planning is the single most valuable thing we can do with our time. That's not an AI insight. It's a leadership insight. Whether we're managing a team of engineers, outsourcing to a vendor, or delegating to an agent, the pattern is the same: communicated intent, clear context, guardrails, and a general direction to head in. That investment pays enormous dividends. Skip it, and we can end up spending many times the effort correcting course after the fact.

This isn't about bikeshedding. Nobody needs a three-week architecture review for a config change. It's about just enough planning to be an effective leader of the team doing the work, whether that team is five humans or five agents.

Cate's plan mode is built around this idea. Before a single line of code gets written, an agent researches the problem, proposes an approach, and decomposes it into discrete, trackable issues. Not a text file on someone's desktop. Real issues, in Jira, GitHub, or Linear, visible to every engineer, PM, and stakeholder on the team. Each issue captures scope, acceptance criteria, and architectural context. The plan becomes a shared artifact, not a private one. The team can review the approach, push back on design decisions, and course-correct before implementation begins, when changes are cheap.
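To make "scope, acceptance criteria, and architectural context" concrete, here is a minimal sketch of what a planned issue could look like as data, along with a check that flags issues a reviewer couldn't meaningfully push back on yet. This is not Cate's actual schema or API. The names `PlannedIssue`, `missing_fields`, and `review_ready`, and the example issues, are all hypothetical and exist only for illustration.

```python
from dataclasses import dataclass


@dataclass
class PlannedIssue:
    """One trackable unit of work from a plan (hypothetical shape, not Cate's schema)."""
    title: str
    scope: str
    acceptance_criteria: list[str]
    architectural_context: str

    def missing_fields(self) -> list[str]:
        """Return the names of the fields that are still empty."""
        missing = []
        if not self.scope.strip():
            missing.append("scope")
        if not self.acceptance_criteria:
            missing.append("acceptance_criteria")
        if not self.architectural_context.strip():
            missing.append("architectural_context")
        return missing


def review_ready(plan: list[PlannedIssue]) -> dict[str, list[str]]:
    """Map each incomplete issue title to its missing fields.

    An empty dict means every issue carries enough context to review.
    """
    gaps = {}
    for issue in plan:
        missing = issue.missing_fields()
        if missing:
            gaps[issue.title] = missing
    return gaps


# Illustrative plan: the second issue is not ready for review.
plan = [
    PlannedIssue(
        title="Add password reset endpoint",
        scope="Reset request and confirmation only; no email templates",
        acceptance_criteria=["Expired tokens are rejected"],
        architectural_context="Reuses the existing token table",
    ),
    PlannedIssue(
        title="Refactor session store",
        scope="",
        acceptance_criteria=[],
        architectural_context="To be decided with the platform team",
    ),
]
print(review_ready(plan))
```

The check itself is trivial. What matters is that the gaps surface before implementation begins, while filling them in is still cheap.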

Decisions That Outlive the Sprint

Planning is half the story. The other half is what happens after the code ships.

When a Cate agent finishes work, it doesn't just close the ticket. It writes an after-action report: what worked, what didn't, key decisions and the reasoning behind them. These get captured as comments on the PR, where reviewers see them immediately, and as research notes in the project's shared knowledge base, where future agents and future engineers can find them.

This is institutional memory that compounds over time. The next agent picking up related work doesn't start cold. It reads prior art and makes better decisions because of it. So does the engineer who joins the team six months from now.

Pull requests become more than diffs. They carry context: why this approach, what was considered, where the tradeoffs landed. Code review shifts from "does this look right?" to "do I agree with these decisions?" That's a much higher-leverage conversation.

Stay Involved

I'm not perfect. I still drop the ball on this. Sometimes an agent picks up a task, the PR comes through, and the temptation is to glance at the green CI badge and hit merge. Every time I've given in to that, I've paid for it later. A data model that doesn't quite fit the domain. An abstraction that made sense to the agent but surprises every human who reads it.

The structure helps. Plans, issues, research notes, after-action reports. They create natural checkpoints, moments where it's easy to stay engaged instead of having to force ourselves back in. But structure without engagement is just bureaucracy.

The developers and teams who get the most from AI won't be the ones who prompt the hardest. They'll be the ones who stay engaged, who treat AI agents as capable collaborators that still need direction, review, and judgment.

Let's add "just enough" planning to your AI workflow. Request early access to Cate, or get in touch. We'd love to hear how your team works.