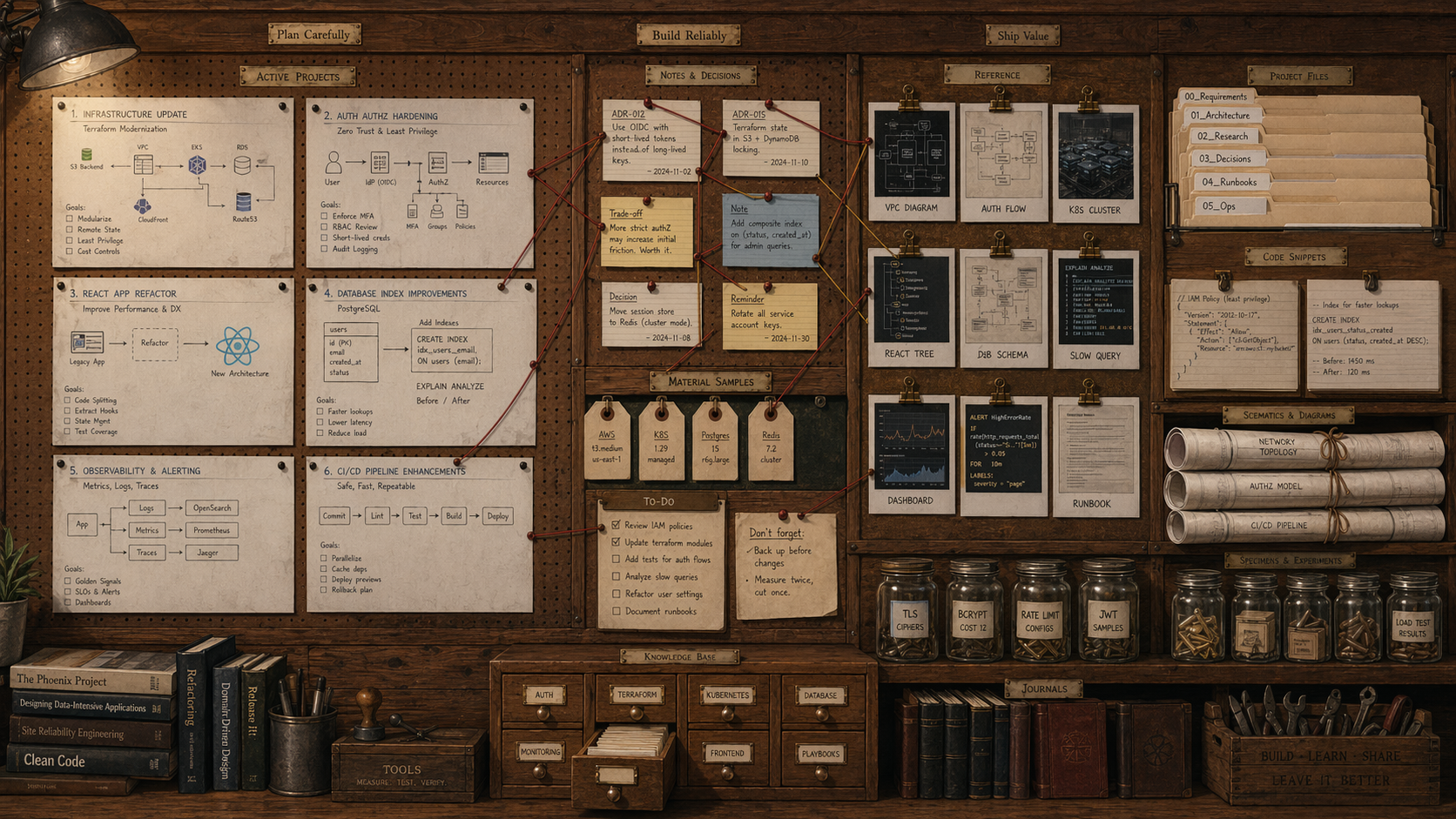

Teams that ship build more than software. They build a shared body of knowledge that evolves over time: the culture, the communication, what worked, what didn't, what the customer really wanted.

That's compound context. And it's what's missing from most AI coding setups.

The disposable session problem

Right now, most AI coding sessions are throwaway conversations. A Claude writes some code, you close the tab, and everything it learned vanishes. The next session starts cold. No memory of what was tried, what failed, or what the team decided last week.

It's like hiring a contractor who does solid work but shreds their notes at the end of every day. Tomorrow, you explain the same things all over again.

Cate works in the open — with the tools you already use

Cate doesn't trap context on your laptop. Its Claudes capture everything in the places your team already looks: Jira, Linear, GitHub.

- Specs filed as real issues

- Key decisions documented in issue comments and handoff notes

- PR feedback preserved in review threads

- Diagrams and plans attached to issues for anyone to reference

- What worked and what didn't captured in structured handoff comments on every status transition

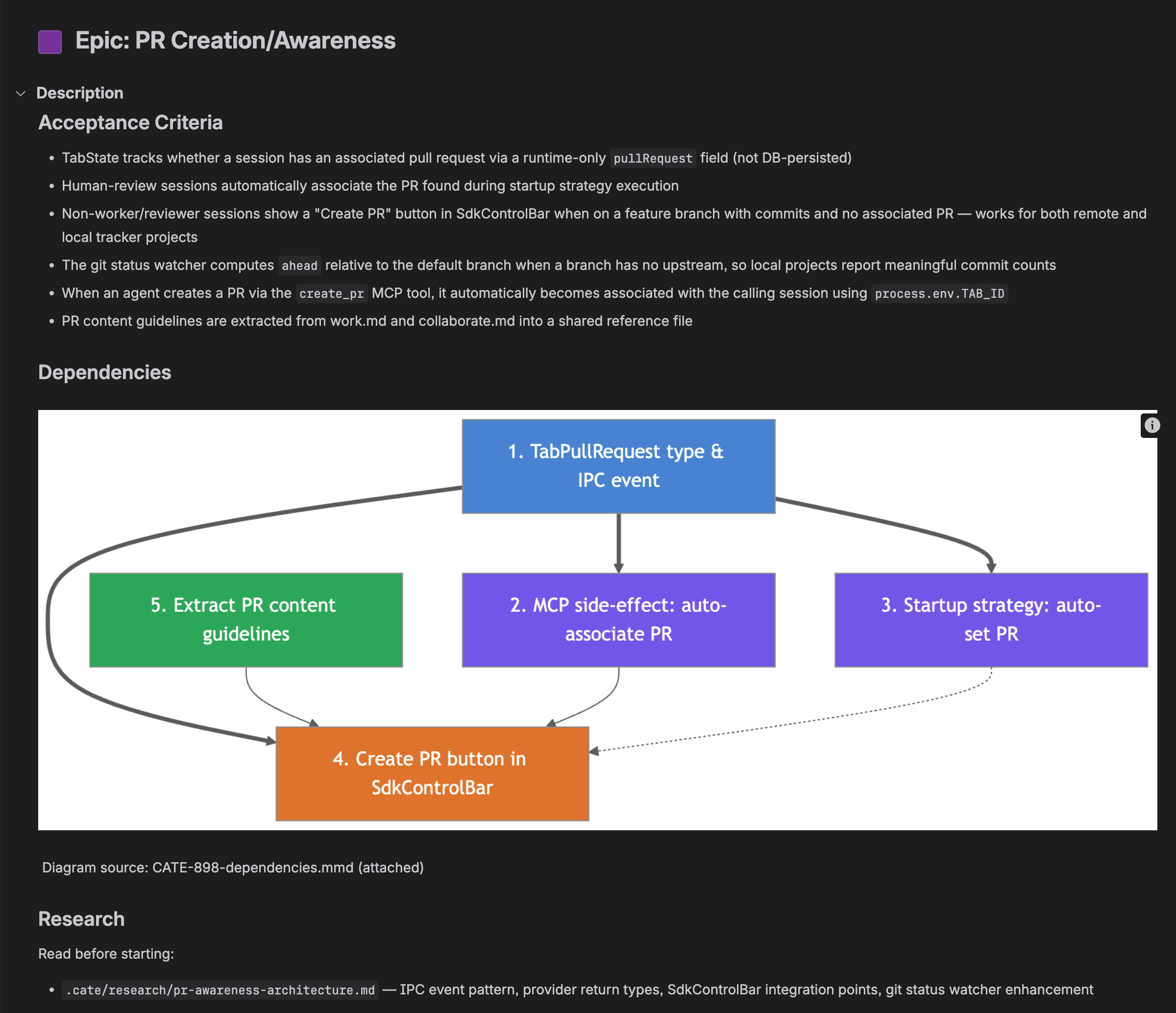

Here's what a planner-written spec looks like in Jira for a real Cate feature:

That's not a chat log buried in someone's terminal. It's a searchable, auditable artifact in your team's existing workflow. Other humans read it. Other sessions read it. Everyone builds on it.

The .cate/research directory

Some knowledge doesn't belong in an issue. It's bigger than a single task. Architecture decisions. Integration quirks. Domain concepts that every session needs to understand.

That's what .cate/research is for. Planners contribute tagged, indexed research files to this directory as they work. A planner investigating your authentication flow might write up how OAuth tokens are refreshed, what edge cases exist, and which endpoints are rate-limited. That research gets tagged and indexed in .cate/research/INDEX.md, so every future session (planner, coder, or reviewer) can find it instantly.

Over time, this directory becomes your project's institutional memory. Not documentation you have to maintain. Living knowledge that sessions create and consume as a natural part of doing the work.
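To make the idea of a tagged index concrete, here is a minimal sketch of how a session might look up research by tag. The post doesn't specify the INDEX.md format, so the layout below (one bullet per file, followed by `| tags:`) is an assumption for illustration, as are the file names and the `parse_index` and `find_by_tag` helpers. This is not Cate's actual implementation.

```python
from __future__ import annotations

from dataclasses import dataclass

# Hypothetical INDEX.md contents. The real format is not documented in this
# post; this layout is assumed purely for illustration.
SAMPLE_INDEX = """\
# Research index
- [oauth-token-refresh.md](oauth-token-refresh.md) | tags: auth, oauth, rate-limits
- [stripe-integration.md](stripe-integration.md) | tags: payments, stripe
"""


@dataclass(frozen=True)
class ResearchEntry:
    path: str
    tags: frozenset[str]


def parse_index(text: str) -> list[ResearchEntry]:
    """Parse bullet lines of the assumed form '- [name](path) | tags: a, b'."""
    entries = []
    for line in text.splitlines():
        line = line.strip()
        if not line.startswith("- [") or "| tags:" not in line:
            continue  # skip headings and anything that isn't an index entry
        link, _, tag_part = line.partition("| tags:")
        path = link[link.index("(") + 1 : link.index(")")]
        tags = frozenset(t.strip().lower() for t in tag_part.split(",") if t.strip())
        entries.append(ResearchEntry(path, tags))
    return entries


def find_by_tag(entries: list[ResearchEntry], tag: str) -> list[str]:
    """Return the paths of research files carrying the given tag (case-insensitive)."""
    tag = tag.lower()
    return [e.path for e in entries if tag in e.tags]


entries = parse_index(SAMPLE_INDEX)
payments_research = find_by_tag(entries, "payments")
```

Under these assumptions, a coder picking up a payments task could pull the planner's Stripe write-up with a single tag lookup before touching the code.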

Positive feedback loops

Compound context doesn't just prevent repeated mistakes. It creates positive feedback loops. Each agent role gets better because it learns from the others:

- Planners can write better specs if they read what reviewers flagged on previous PRs. A reviewer keeps catching missing error handling? The next planner can account for it in the spec.

- Coders can ramp faster if they read the research directory before touching unfamiliar code. A coder picking up a payments task might find the planner's research on your Stripe integration already waiting.

- Reviewers can get sharper when they see the full history: the spec, the planner's rationale, the coder's commit messages. They review against intent, not just syntax.

- Sub-agents can inherit context. When a planner spawns a sub-agent to validate a spec, it can pass along the collective context. The sub-agent doesn't have to start cold.

- New team members onboard from the record. When a human joins the project, the issue history, research directory, and handoff comments tell the story of every decision. No tribal knowledge required.

- Guardrails evolve from real feedback. When reviewers consistently flag the same class of issue, you capture it as a guardrail. The pattern goes from “reviewer catches it” to “automated gate prevents it.”

- Cross-project learning. Research files are committed to your repo. Fork a project or start a similar one, and the institutional knowledge comes along for the ride.
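The guardrail loop above can be sketched in a few lines. This is an illustrative example only: the flag data, the issue-class labels, and the `guardrail_candidates` helper are hypothetical, and the threshold of three PRs is an arbitrary assumption rather than anything Cate prescribes.

```python
from collections import Counter

# Hypothetical review flags: (pr_number, issue_class) pairs, as they might be
# gathered from review threads. The labels and numbers are made up.
REVIEW_FLAGS = [
    (101, "missing-error-handling"),
    (101, "naming"),
    (104, "missing-error-handling"),
    (107, "missing-error-handling"),
    (107, "missing-tests"),
    (109, "missing-tests"),
]


def guardrail_candidates(flags, min_prs=3):
    """Return issue classes flagged on at least `min_prs` distinct PRs."""
    # Deduplicate so that repeated flags on the same PR count once.
    distinct = {(pr, issue) for pr, issue in flags}
    counts = Counter(issue for _, issue in distinct)
    return [issue for issue, n in counts.most_common() if n >= min_prs]


candidates = guardrail_candidates(REVIEW_FLAGS)
```

Here the class that reviewers flagged on three separate PRs surfaces as a candidate for an automated gate, while one-off comments stay out of the way.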

Better every loop

It's table stakes to get an AI to write code. The harder question is: was it any good? And did the AI work as a team player?

Cate's answer is that every session leaves the project smarter than it found it. Specs, decisions, research, feedback — all searchable, all auditable, full history. Not locked in a chat window. Not lost when the session ends. Out in the open, in the tools you already use.

That's compound context. And over time, it's the difference between an AI that writes code and a team that ships software.

Ready to see compound context in action? Request early access to Cate, or get in touch — we'd love to hear what you're building.